#6 | How does a Linear layer act on a multi-dimensional tensor?

It sounds trivial for a 1D tensor, but what about a 3D tensor (or a tensor with any number of dimensions)?

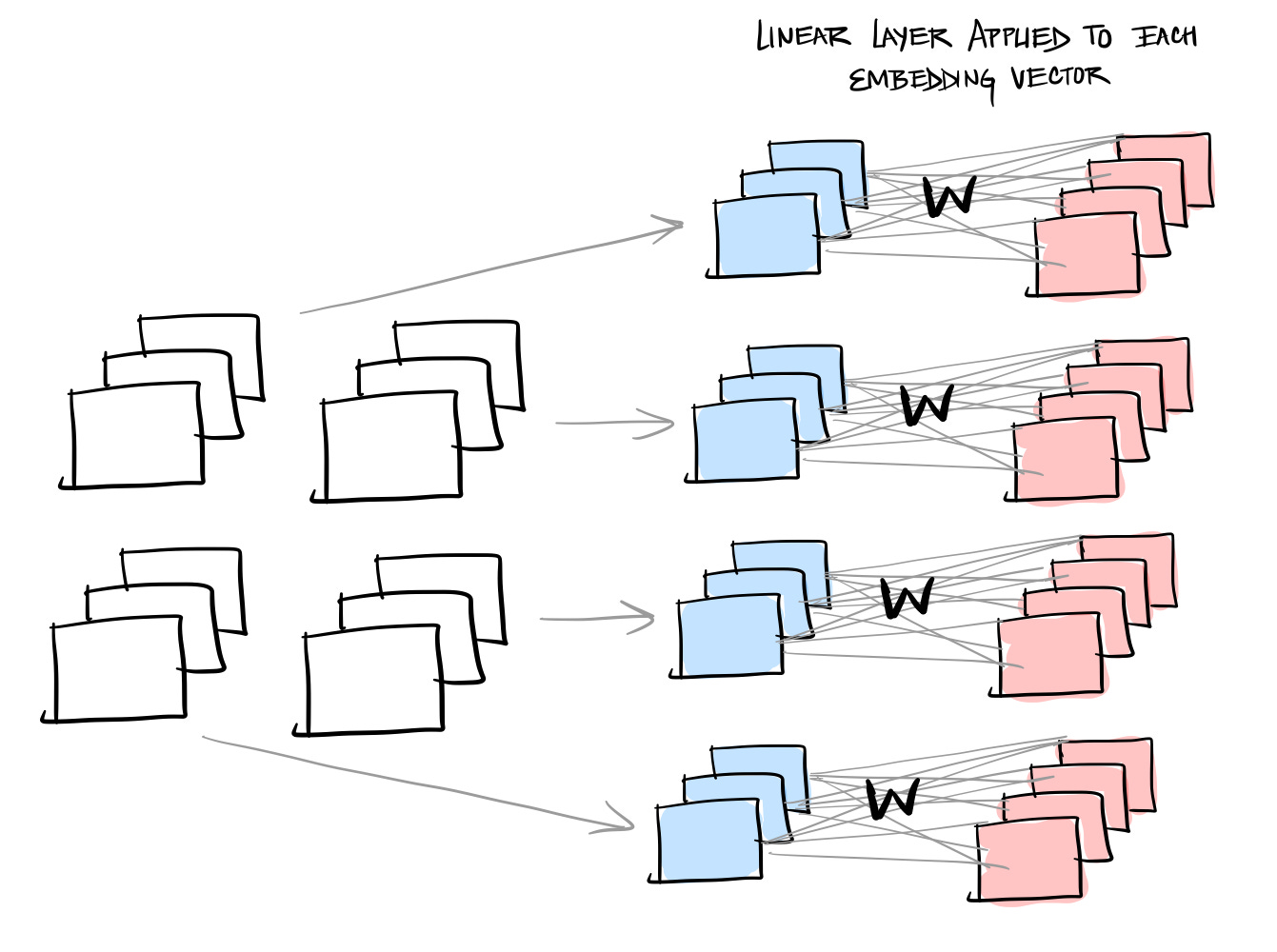

We can understand the essence of a Linear layer through the first image:

The input is a vector of 3 elements; transforming it with W gives an output vector of 4 elements. W is the weight matrix of the Linear layer: it is initialized when the layer is created, and its values are learned during training.
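A minimal PyTorch sketch of this 1D case, assuming `torch` is installed. One detail worth knowing: `nn.Linear` stores W with shape (out_features, in_features) and, by default, also adds a bias vector b, so the computation is y = W x + b. The variable names are only for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)  # make the random initialization reproducible

# Linear layer mapping vectors of size 3 to vectors of size 4
linear = nn.Linear(in_features=3, out_features=4)

x = torch.randn(3)   # input vector with 3 elements
y = linear(x)        # output vector with 4 elements

# The same result computed by hand: W has shape (4, 3), b has shape (4,)
W, b = linear.weight, linear.bias
y_manual = W @ x + b

print(x.shape, y.shape)                         # torch.Size([3]) torch.Size([4])
print(torch.allclose(y, y_manual, atol=1e-6))   # True
```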

But what happens when the Linear layer is applied to a 3D tensor?

In NLP, for example, a typical input tensor has 3 dimensions: batch size, sequence length, and embedding size.

In such cases, the Linear layer acts only on the last dimension (the embedding dimension) of the input tensor, transforming each embedding from one size to another.
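To make the NLP case concrete, here is a sketch with a hypothetical batch of token embeddings; the sizes B=2, T=5, D=8 and D'=16 are arbitrary choices for illustration. The `torch.einsum` line spells out the rule explicitly: every (batch, position) pair gets the same W and b applied to its embedding vector, and only the last dimension changes size.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

B, T, D, D_out = 2, 5, 8, 16      # batch, sequence length, embedding size, new size
x = torch.randn(B, T, D)          # (B, T, D) input, e.g. token embeddings

linear = nn.Linear(D, D_out)
y = linear(x)                     # only the last dimension changes: (B, T, D_out)

# Explicit version: contract input dim d with W[o, d] at every (b, t), then add bias
y_einsum = torch.einsum("btd,od->bto", x, linear.weight) + linear.bias

print(y.shape)                                  # torch.Size([2, 5, 16])
print(torch.allclose(y, y_einsum, atol=1e-6))   # True
```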

In other words, the 2x2x3 tensor in the example above can be viewed as a collection of 4 (2x2) vectors, each of size 3.

The Linear layer then applies the same transformation (the same W) to each of these 4 vectors independently, as shown below. Each vector is transformed from size 3 to size 4.

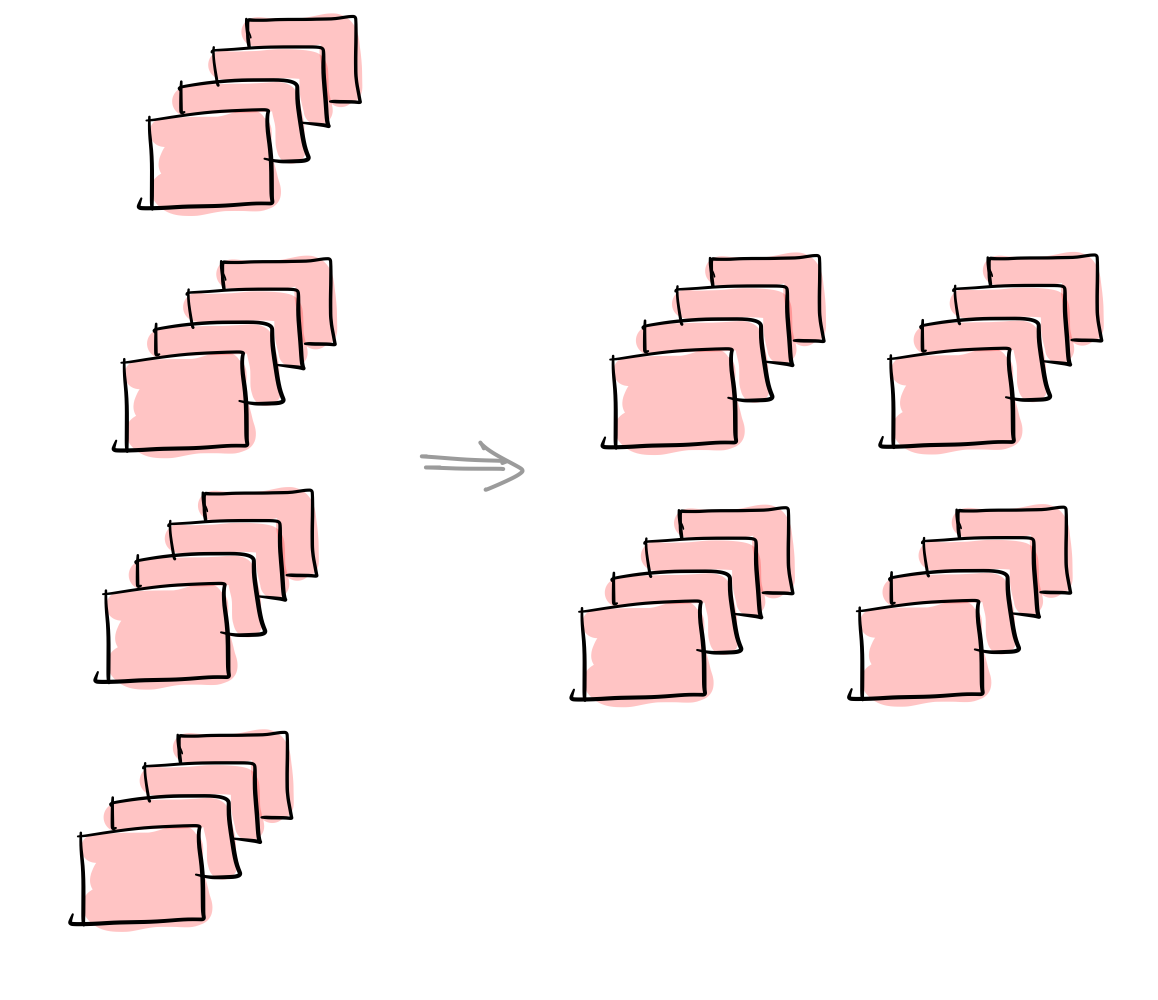
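This per-vector picture can be checked numerically. The sketch below builds a random 2x2x3 tensor like the one in the example, applies the layer to each of the 4 vectors one at a time, and compares the result with a single call on the whole tensor. The loop is for illustration only; in practice you would just call the layer once.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

linear = nn.Linear(3, 4)
x = torch.randn(2, 2, 3)          # a 2x2 grid of vectors, each of size 3

y_loop = torch.empty(2, 2, 4)
with torch.no_grad():             # no gradients needed for this check
    # Apply the layer to each of the 4 vectors separately
    for i in range(2):
        for j in range(2):
            y_loop[i, j] = linear(x[i, j])   # size 3 -> size 4

    y_direct = linear(x)                     # one call on the whole tensor

print(y_direct.shape)                               # torch.Size([2, 2, 4])
print(torch.allclose(y_loop, y_direct, atol=1e-6))  # True
```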

The transformed vectors are then rolled back into the original layout, so the overall transformation maps the 2x2x3 input tensor to a 2x2x4 output tensor.
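One way to express this "unroll, transform, roll back" view in code is with explicit reshapes. This is a sketch for intuition, assuming a random 2x2x3 input; it is not meant as a description of how PyTorch implements `nn.Linear` internally.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

linear = nn.Linear(3, 4)
x = torch.randn(2, 2, 3)

flat = x.reshape(-1, 3)                # unroll: 4 vectors of size 3 -> shape (4, 3)
flat_out = linear(flat)                # transform each row: shape (4, 4)
y_rolled = flat_out.reshape(2, 2, 4)   # roll back: shape (2, 2, 4)

y_direct = linear(x)
print(x.shape, "->", y_direct.shape)   # torch.Size([2, 2, 3]) -> torch.Size([2, 2, 4])
print(torch.allclose(y_rolled, y_direct, atol=1e-6))  # True
```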

The nn.Linear API in PyTorch takes in_features and out_features, which refer to the size of the last dimension of the input and output tensors, respectively.

In our example: in_features (D) = 3 and out_features (D') = 4.
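A quick way to see where in_features and out_features end up is to inspect the layer's parameters. Per the PyTorch documentation, `weight` has shape (out_features, in_features) and `bias` has shape (out_features,); neither depends on the batch or sequence dimensions of the input.

```python
import torch.nn as nn

linear = nn.Linear(in_features=3, out_features=4)

print(linear.weight.shape)   # torch.Size([4, 3])  i.e. (out_features, in_features)
print(linear.bias.shape)     # torch.Size([4])     i.e. (out_features,)
print(linear)                # Linear(in_features=3, out_features=4, bias=True)
```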

Summary:

It doesn’t matter what the shape of the input tensor is; only the last dimension gets transformed (and its size must equal in_features).
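As a final check of the summary, the sketch below feeds tensors with different numbers of leading dimensions through the same layer; only the last dimension changes. It also shows the one real constraint: if the last dimension does not equal in_features, PyTorch raises a RuntimeError (the exact message varies between versions). The list of shapes is arbitrary.

```python
import torch
import torch.nn as nn

linear = nn.Linear(3, 4)

results = {}
for shape in [(3,), (5, 3), (2, 2, 3), (2, 3, 4, 5, 3)]:
    out = linear(torch.randn(*shape))
    results[shape] = tuple(out.shape)   # leading dims kept, last dim 3 -> 4
    print(shape, "->", results[shape])

# A mismatched last dimension is rejected
mismatch_rejected = False
try:
    linear(torch.randn(2, 2, 5))        # last dim is 5, but in_features is 3
except RuntimeError:
    mismatch_rejected = True
print("mismatch rejected:", mismatch_rejected)
```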