#12 | MIPS vs MCSS (Maximum Inner Product / Cosine Similarity Search)

And the one that matters !!

You must have come across this popular problem statement:

The “similarity” here depends on the similarity metric we are trying to use - it can be “inner product” which is the same as the dot product or “cosine similarity”.

Just a reminder of the differences between the two:

The former is the projection of one vector over another but the latter is the angle between the two vectors.

Now - if we plan to use inner product as the similarity metric - the problem statement is referred to as Maximum Inner Product Search (MIPS), or mathematically:

But if it is cosine similarity then Maximum Cosine Similarity Search (MCSS)

That’s it - just a fancy name for a non-fancy problem statement.

Note that:

Where exactly are these used?

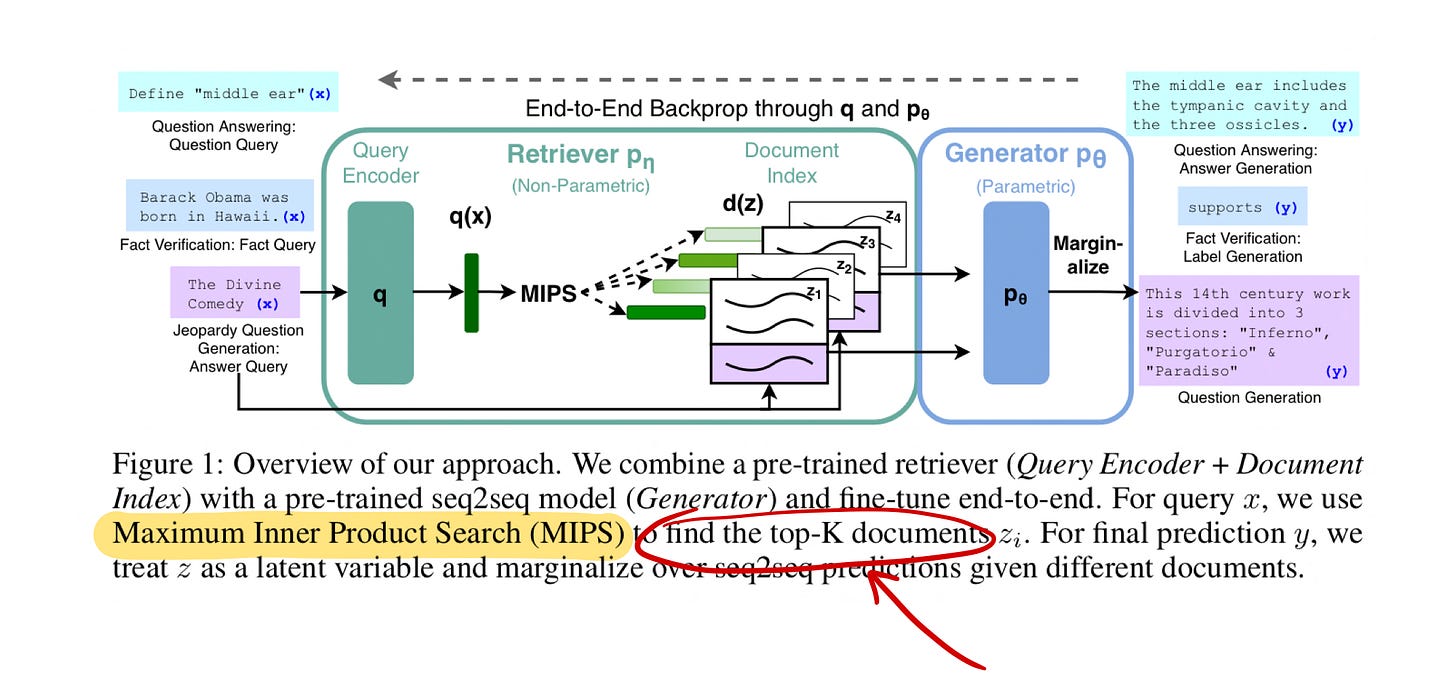

Below is one example paper reference so that you can understand the context in which these are mentioned:

Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks

(This is the original paper behind the popular RAG framework)

That’s it - to find the top-k documents. To generalize - these are used in recommendation engines and text retrieval scenarios where the idea is to get the most similar/closest vector/item from the corpus.

When to use MIPS vs MCSS?

MIPS is sensitive to vector magnitude whereas MCSS is not. So cases where vector magnitude matters - go for MIPS otherwise MCSS.

Let’s take an example of doc retrieval where each doc is represented using a vector of word counts. If the doc is long - it will give us a high magnitude vector because of larger word counts. This might not be a desirable behavior and we would want to normalize the doc vectors by their magnitudes - hence MCSS makes sense here.

How do we solve it?

A brute force way would be to go over all the vectors one by one, calculate the similarity metric, and get the closest one.

The problem arises if the number of vectors is large - and this is precisely where the Approximate Nearest Neighbors (ANN) approach can help us. You can go through the below Short to grasp the main idea behind this technique.

ANN will give you the approximate candidates with efficient time complexity. Once we have the candidates we can do simple brute force MIPS / MCSS to get the final closest / most similar vector.

That’s it for MIPS/MCSS. Until next time 👋🏼

But before that - a small quiz: